On *nix-based servers, mod_rewrite can be a powerful tool in any web monkey’s arsenal. It is still voodoo to many, however, and others may not even be aware that it can help them at all.

What is Mod_Rewrite?

Simply put, mod_rewrite is an Apache module that lets you rewrite URLs based on rules you define. That’s it. Seriously.

Regardless of how confusing some of the rules you may have come across appear to be, all they are doing is taking one URL and rewriting it as a different URL. This rewriting happens at the server level, before the user’s browser sees anything, so the end result is seamless to them.

When you hear about “search engine friendly” URLs, you’re most often seeing mod_rewrite in action. It lets you turn a URL like:

http://www.example.com/shop.php?category=Books&Title=Foo

into:

http://www.example.com/shop/books/foo.html
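As a rough sketch of how that mapping could be wired up (this rule is an illustration, not taken from a real shop; it assumes shop.php sits in the web root and is happy to receive the lowercase values from the friendly URL):

```apache
# Hypothetical rule: /shop/books/foo.html is quietly served by
# shop.php?category=books&Title=foo
RewriteEngine On
RewriteRule ^shop/([^/]+)/([^/]+)\.html$ shop.php?category=$1&Title=$2 [L]
```

The visitor only ever sees the .html address; the query-string version stays behind the scenes on the server.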

Some other common uses for mod_rewrite:

- Directing all traffic from multiple domain names to one domain

- Directing all traffic from www and non-www to one location

- Blocking traffic from specific websites

- Blocking spammy searchbots and offline browsers from spidering your site and eating your bandwidth

- Masking file extensions

- Preventing image hotlinking (other web pages linking to images on your server)

Apache’s mod_rewrite can be intimidating if you start where you’re supposed to start, with the Apache documentation. However, there are some very useful, common, and simple rewrite rules that you may wish to work into your site development plan, if you’re not using them already.

Note: If you’re using Microsoft IIS, you have a few options, but I don’t use IIS, so I’m afraid I won’t be of much help to you beyond telling you where to look. ISAPI ReWrite seems to be very popular, and there is a free “lite” version available.

Getting Started

Your mod_rewrite rules typically live in an .htaccess file in your web root. You can only have one .htaccess per directory, but you can have individual .htaccess files in sub-directories under the web root. I generally do not recommend doing this. If mod_rewrite rules from one .htaccess conflict with the rules from the .htaccess in a sub-directory, it can be a real pain in the ass to troubleshoot. Try to avoid it.

When you’re adding mod_rewrite rules to your .htaccess file, you’ll want to start by using a conditional that checks to see if mod_rewrite is installed on your server. This can prevent a 500 Internal Server Error if it isn’t.

```apache
<IfModule mod_rewrite.c>
# Start your (rewrite) engines…
RewriteEngine On

# rules and conditions go here…
</IfModule>
```

Directing Multiple Domain Names to a Single Domain URL

If you have multiple domain names pointing to the same site, mod_rewrite can also help you direct all traffic to a single domain URL, to improve your search engine rankings. Search engines don’t take too kindly to the same content living at multiple URLs (they usually think it’s an attempt to spam the search engine), and you can actually be penalized for it. To redirect all traffic to one specific domain name, use:

```apache
RewriteCond %{HTTP_HOST} !^www\.snipe\.net$ [NC]
RewriteRule ^(.*)$ http://www.snipe.net/$1 [R=301]
```

This basically says “if the domain requested (the HTTP_HOST) does not match www.snipe.net, then rewrite the URL as www.snipe.net”. (Note the escaping backslashes before each dot in the condition.) The R=301 specifies that the redirect should be a 301 redirect, meaning that the address has moved permanently and search engines should use the new URL instead of the old one.
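If you would rather redirect only a known set of extra domains instead of everything that isn’t the main one, a variation might look like the sketch below. The two alias domains are made-up placeholders, so swap in your own:

```apache
# Hypothetical alias domains; only these hosts get redirected
RewriteCond %{HTTP_HOST} ^(www\.)?snipe-alias\.example$ [NC,OR]
RewriteCond %{HTTP_HOST} ^(www\.)?another-alias\.example$ [NC]
RewriteRule ^(.*)$ http://www.snipe.net/$1 [R=301,L]
```

The trade-off is that you have to remember to add a condition each time you point a new domain at the site, whereas the “anything that isn’t www.snipe.net” version catches them automatically.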

To www or not to www

Even if you have only one domain name, if you’re not redirecting traffic from the “www” version to the “non-www” version (or vice versa), you may run into the same duplicate-content problem. Whether or not you use the www in your URL is largely a branding decision (i.e. it doesn’t really matter in most cases), but you should pick one and stick with it.

Require (force) the www

```apache
RewriteCond %{HTTP_HOST} !^www\.snipe\.net$ [NC]
RewriteRule ^(.*)$ http://www.snipe.net/$1 [R=301,L]
```

Remove the www

```apache
RewriteCond %{HTTP_HOST} !^snipe\.net$ [NC]
RewriteRule ^(.*)$ http://snipe.net/$1 [R=301,L]
```

Deny traffic by referrer

There may be a few reasons why you want to block traffic by referrer. Maybe you’re getting a lot of bandwidth-sucking hits from a spammy website, or maybe someone is linking to you that you feel does not represent you very well, and you want to pull the plug on traffic coming from their site.

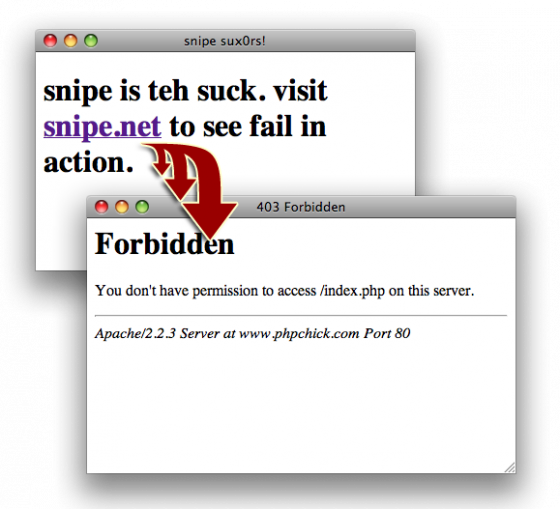

```apache
RewriteCond %{HTTP_REFERER} onebadsite\.com [NC,OR]
RewriteCond %{HTTP_REFERER} anotherbadsite\.com [NC]
RewriteRule .* - [F,L]
```

In this snippet, the rule is saying “If the referring URL is onebadsite.com OR anotherbadsite.com, respond with an HTTP 403 Forbidden error.” The NC flag makes the condition case-insensitive, and the OR flag is… well… an “or”. OR is used with multiple RewriteCond directives to combine them with OR instead of the implicit AND. The lone hyphen in the RewriteRule means “leave the URL alone”; the F flag is what actually refuses the request.

Keep in mind – this method of blocking traffic is hardly foolproof, at least in the latter of the two scenarios above. If the webmaster of onebadsite.com is linking to you in a way or context you do not want (and you’ve asked them to remove the link), the above method will cause a user on onebadsite.com’s website who has clicked on the link to you from onebadsite.com to hit a Forbidden error. If that user has half a brain, they may very well just google your site name or try to access it later from a bookmark – but it’s a simple measure you can take to keep the idjits out.
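If the list of unwanted referrers starts to grow, the separate conditions can be folded into a single regex alternation. This is just a sketch using the same two placeholder site names as above:

```apache
# One condition, two referrers: the | inside the parentheses is a regex "or"
RewriteCond %{HTTP_REFERER} (onebadsite|anotherbadsite)\.com [NC]
RewriteRule .* - [F,L]
```

It behaves the same as the two-condition version; which one you prefer is mostly a matter of readability.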

Blocking Bad Bots and Spiders

While there is some potential debate as to what is a “bad” bot or spider, the consensus seems to be that a bot is bad if it does more harm than good, such as e-mail harvesters, site rippers that download entire websites for offline browsing, etc. Even if bandwidth isn’t so much an issue, I like to block these just on principle.

Please note – this list is not mine – it was directly nicked from a list on JavascriptKit.

```apache
RewriteCond %{HTTP_USER_AGENT} ^BlackWidow [OR]
RewriteCond %{HTTP_USER_AGENT} ^Bot\ mailto:craftbot@yahoo.com [OR]
RewriteCond %{HTTP_USER_AGENT} ^ChinaClaw [OR]
RewriteCond %{HTTP_USER_AGENT} ^Custo [OR]
RewriteCond %{HTTP_USER_AGENT} ^DISCo [OR]
RewriteCond %{HTTP_USER_AGENT} ^Download\ Demon [OR]
RewriteCond %{HTTP_USER_AGENT} ^eCatch [OR]
RewriteCond %{HTTP_USER_AGENT} ^EirGrabber [OR]
RewriteCond %{HTTP_USER_AGENT} ^EmailSiphon [OR]
RewriteCond %{HTTP_USER_AGENT} ^EmailWolf [OR]
RewriteCond %{HTTP_USER_AGENT} ^Express\ WebPictures [OR]
RewriteCond %{HTTP_USER_AGENT} ^ExtractorPro [OR]
RewriteCond %{HTTP_USER_AGENT} ^EyeNetIE [OR]
RewriteCond %{HTTP_USER_AGENT} ^FlashGet [OR]
RewriteCond %{HTTP_USER_AGENT} ^GetRight [OR]
RewriteCond %{HTTP_USER_AGENT} ^GetWeb! [OR]
RewriteCond %{HTTP_USER_AGENT} ^Go!Zilla [OR]
RewriteCond %{HTTP_USER_AGENT} ^Go-Ahead-Got-It [OR]
RewriteCond %{HTTP_USER_AGENT} ^GrabNet [OR]
RewriteCond %{HTTP_USER_AGENT} ^Grafula [OR]
RewriteCond %{HTTP_USER_AGENT} ^HMView [OR]
RewriteCond %{HTTP_USER_AGENT} HTTrack [NC,OR]
RewriteCond %{HTTP_USER_AGENT} ^Image\ Stripper [OR]
RewriteCond %{HTTP_USER_AGENT} ^Image\ Sucker [OR]
RewriteCond %{HTTP_USER_AGENT} Indy\ Library [NC,OR]
RewriteCond %{HTTP_USER_AGENT} ^InterGET [OR]
RewriteCond %{HTTP_USER_AGENT} ^Internet\ Ninja [OR]
RewriteCond %{HTTP_USER_AGENT} ^JetCar [OR]
RewriteCond %{HTTP_USER_AGENT} ^JOC\ Web\ Spider [OR]
RewriteCond %{HTTP_USER_AGENT} ^larbin [OR]
RewriteCond %{HTTP_USER_AGENT} ^LeechFTP [OR]
RewriteCond %{HTTP_USER_AGENT} ^Mass\ Downloader [OR]
RewriteCond %{HTTP_USER_AGENT} ^MIDown\ tool [OR]
RewriteCond %{HTTP_USER_AGENT} ^Mister\ PiX [OR]
RewriteCond %{HTTP_USER_AGENT} ^Navroad [OR]
RewriteCond %{HTTP_USER_AGENT} ^NearSite [OR]
RewriteCond %{HTTP_USER_AGENT} ^NetAnts [OR]
RewriteCond %{HTTP_USER_AGENT} ^NetSpider [OR]
RewriteCond %{HTTP_USER_AGENT} ^Net\ Vampire [OR]
RewriteCond %{HTTP_USER_AGENT} ^NetZIP [OR]
RewriteCond %{HTTP_USER_AGENT} ^Octopus [OR]
RewriteCond %{HTTP_USER_AGENT} ^Offline\ Explorer [OR]
RewriteCond %{HTTP_USER_AGENT} ^Offline\ Navigator [OR]
RewriteCond %{HTTP_USER_AGENT} ^PageGrabber [OR]
RewriteCond %{HTTP_USER_AGENT} ^Papa\ Foto [OR]
RewriteCond %{HTTP_USER_AGENT} ^pavuk [OR]
RewriteCond %{HTTP_USER_AGENT} ^pcBrowser [OR]
RewriteCond %{HTTP_USER_AGENT} ^RealDownload [OR]
RewriteCond %{HTTP_USER_AGENT} ^ReGet [OR]
RewriteCond %{HTTP_USER_AGENT} ^SiteSnagger [OR]
RewriteCond %{HTTP_USER_AGENT} ^SmartDownload [OR]
RewriteCond %{HTTP_USER_AGENT} ^SuperBot [OR]
RewriteCond %{HTTP_USER_AGENT} ^SuperHTTP [OR]
RewriteCond %{HTTP_USER_AGENT} ^Surfbot [OR]
RewriteCond %{HTTP_USER_AGENT} ^tAkeOut [OR]
RewriteCond %{HTTP_USER_AGENT} ^Teleport\ Pro [OR]
RewriteCond %{HTTP_USER_AGENT} ^VoidEYE [OR]
RewriteCond %{HTTP_USER_AGENT} ^Web\ Image\ Collector [OR]
RewriteCond %{HTTP_USER_AGENT} ^Web\ Sucker [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebAuto [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebCopier [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebFetch [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebGo\ IS [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebLeacher [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebReaper [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebSauger [OR]
RewriteCond %{HTTP_USER_AGENT} ^Website\ eXtractor [OR]
RewriteCond %{HTTP_USER_AGENT} ^Website\ Quester [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebStripper [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebWhacker [OR]
RewriteCond %{HTTP_USER_AGENT} ^WebZIP [OR]
RewriteCond %{HTTP_USER_AGENT} ^Wget [OR]
RewriteCond %{HTTP_USER_AGENT} ^Widow [OR]
RewriteCond %{HTTP_USER_AGENT} ^WWWOFFLE [OR]
RewriteCond %{HTTP_USER_AGENT} ^Xaldon\ WebSpider [OR]
RewriteCond %{HTTP_USER_AGENT} ^Zeus
RewriteRule ^.* - [F,L]
```

Once again, this method isn’t foolproof. The HTTP_USER_AGENT is quite easily spoofed, and some site ripping applications even allow you to specify what user agent you want to appear as. But if your site is large, implementing this list may make a significant impact on your monthly bandwidth bill.
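If a list that long makes your eyes glaze over, the same idea can be expressed more compactly by grouping user agents into a regex alternation. The sketch below uses only a handful of names from the list above to show the shape; it is not a complete replacement for it:

```apache
# A few agents from the list, matched at the start of the user agent string
RewriteCond %{HTTP_USER_AGENT} ^(BlackWidow|ChinaClaw|EmailSiphon|WebZIP|Wget) [OR]
# HTTrack can appear anywhere in the string, in any case
RewriteCond %{HTTP_USER_AGENT} HTTrack [NC]
RewriteRule ^.* - [F,L]
```

One condition per line is easier to diff and comment out, while the grouped form is shorter to read; pick whichever you find easier to maintain.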

Mask File Extensions

If for some reason you want to hide the fact that you’re using PHP (or Perl, or whatever), all it takes is a simple line in your .htaccess to have your .php files look like .html files:

```apache
RewriteRule ^(.*)\.html$ $1.php [L]
```

You could even completely obfuscate it if you wanted to, for example serving files that end in .snipe that are really .php files:

```apache
RewriteRule ^(.*)\.snipe$ $1.php [L]
```

These examples take every request that ends in .html (or .snipe) and serve it from filename.php, so it looks like all your pages are .html (or .snipe) when really they are .php. Notice that there is no R flag this time: a 301 redirect would send the browser to the .php address and give the game away, so the rewrite has to stay internal to the server.
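A slightly more defensive variation only rewrites when there is actually a PHP file to serve. This is a sketch that assumes the .htaccess lives in the web root, so that DOCUMENT_ROOT plus the captured path points at the right file:

```apache
RewriteEngine On
# Leave the request alone if a real .html file exists at that path
RewriteCond %{REQUEST_FILENAME} !-f
# Only rewrite if a .php file with the same base name exists
RewriteCond %{DOCUMENT_ROOT}/$1.php -f
# Internal rewrite (no R flag), so the browser keeps showing .html
RewriteRule ^(.*)\.html$ $1.php [L]
```

This way any genuine static .html pages keep working, and requests for pages that don’t exist fall through to a normal 404.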

Prevent Image/File Hotlinking

This snippet prevents people from hotlinking to your files, that is, linking directly to files hosted on your server from their website, thus sucking your bandwidth. It should be noted that in my experience, this rewrite rule seems somewhat spotty, and doesn’t always work, so be sure to test thoroughly.

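```apache
RewriteCond %{HTTP_REFERER} !^$
RewriteCond %{HTTP_REFERER} !^http://(www\.)?snipe\.net/.*$ [NC]
# Skip the replacement image itself so the redirect cannot loop
RewriteCond %{REQUEST_URI} !^/images/dont_steal_bandwidth_jackass\.png$
RewriteRule \.(gif|jpg|swf|flv|png)$ /images/dont_steal_bandwidth_jackass.png [R=302,L]
```

This rule basically says “If the request’s referrer is not blank (a blank referrer usually means the file was accessed directly in a browser) AND is not snipe.net (case insensitive), redirect any request for a file that ends in .gif, .jpg, .swf, .flv or .png to /images/dont_steal_bandwidth_jackass.png.” The extra REQUEST_URI condition leaves the replacement image itself alone. It ends in .png too, so without that condition a hotlinked request would be redirected to the replacement image, match the rule again, and loop.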
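If you don’t need the satisfaction of a replacement image, a simpler option is to refuse hotlinked requests outright. The sketch below reuses the same referrer checks and simply answers with a 403 Forbidden:

```apache
# Allow empty referrers and requests coming from our own pages
RewriteCond %{HTTP_REFERER} !^$
RewriteCond %{HTTP_REFERER} !^http://(www\.)?snipe\.net/ [NC]
# Everything else asking for these file types gets a 403
RewriteRule \.(gif|jpg|swf|flv|png)$ - [F]
```

Since nothing is redirected, there is no replacement image to serve and no chance of the rule tripping over its own redirect.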

I have a friend who was trolling through his server logs (as he tended to do) when he realized that someone was using an image from his server (a Debian logo, if I recall correctly) as their MySpace background image. My friend spicyjack, being of the snarky persuasion, set up a leech-prevention mod_rewrite rule that directed all requests for that image that were not coming FROM his server to an image that was… shall we say… not something you’d want as the background image of your MySpace page. (Google “hotcurry.jpg” if you’re really curious. It’s NSFW. Or anything, for that matter.)

Search Engine Friendly URLs – Make Dynamic Pages appear Static

To turn site.com/index.php?category=12&subcat=bar into site.com/category-12/bar.html, just use:

```apache
RewriteRule ^category-([0-9]+)/([0-9A-Za-z]+)\.html$ index.php?category=$1&subcat=$2
```

The category number and the subcategory name are variables, represented in the substitution by $1 and $2. Each pair of round brackets captures whatever part of the requested URL matches the regex inside it, and that captured text is what gets dropped in wherever $1 and $2 appear. The regex inside the brackets defines what the variable can contain. For example, [0-9] means any digit from 0 to 9, and the + sign means that the digit can be repeated one or more times. The backslash before .html makes the dot a literal dot instead of the regex “any character” wildcard.
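If your friendly URLs may also carry an extra query string (say, a hypothetical ?page=2 for pagination), the QSA flag tells mod_rewrite to append the original query string to the rewritten one instead of discarding it. A sketch, using the same made-up category and subcat parameters:

```apache
# /category-12/bar.html?page=2 becomes index.php?category=12&subcat=bar&page=2
RewriteRule ^category-([0-9]+)/([0-9A-Za-z]+)\.html$ index.php?category=$1&subcat=$2 [QSA,L]
```

Without QSA, a substitution that contains its own query string replaces whatever query string the visitor sent.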

The [L]ast Word

The [L] flag tells mod_rewrite that no more rules should be processed once that rule has matched. Also remember that the order these rules are in DOES matter, so you’ll want to consider their intended behavior carefully when you’re planning your .htaccess. If at all possible, have your Apache error logs accessible while experimenting. When mod_rewrite goes wrong, it very often gives you a generic (and infuriating) “500 Internal Server Error” instead of anything actually useful to you, and the Apache error logs may help shed some light on things.

Also be sure to test thoroughly whenever using mod_rewrite, since you can seriously break stuff if you’re not careful.
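If you have access to the main server configuration (not just .htaccess), you can also ask mod_rewrite to narrate what it is doing in the error log. The line below is a sketch that assumes Apache 2.4 or later, where rewrite logging is controlled through LogLevel:

```apache
# Goes in the main server or virtual host config, not in .htaccess
# Assumes Apache 2.4+; higher trace levels are more verbose
LogLevel alert rewrite:trace3
```

Trace logging is chatty and slows things down, so treat it as a debugging aid and turn it back off once you have found the problem.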

More Resources

Can’t get enough mod_rewrite? Check out these links for additional information and tips.

And if you’ve got a handy mod_rewrite rule you can’t live without, let us know in the comments.